AWS S3 Multipart Upload using AWS CLI

Cloud enthusiast working as a Cloud Infrastructure Consultant. My hobby is building and destroying cloud projects for blogs. I love to share my learning journey in DevOps, AWS and Azure.

Subscribe to and follow "CloudCubes".

Thank you and Happy Learning!

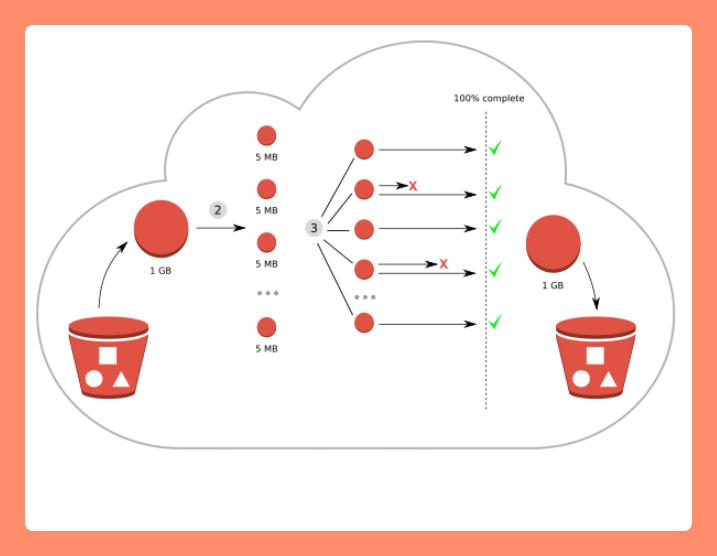

Uploading and copying objects using Multipart Upload allows you to upload a single object as a set of parts.

Each part is a contiguous portion of the object's data. You can upload these object parts independently and in any order. If transmission of any part fails, you can retransmit that part without affecting other parts. After all parts of your object are uploaded, Amazon S3 assembles these parts and creates the object.

In general, when your object size reaches 100 MB, you should consider using multipart uploads instead of uploading the object in a single operation.

Using multipart upload provides the following advantages:

Improved throughput – You can upload parts in parallel to improve throughput.

Quick recovery from any network issues – Smaller part size minimizes the impact of restarting a failed upload due to a network error.

Pause and resume object uploads – You can upload object parts over time. After you initiate a multipart upload, there is no expiry; you must explicitly complete or stop the multipart upload.

Begin an upload before you know the final object size – You can upload an object as you are creating it.
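Unfinished uploads never expire on their own. S3 also keeps, and bills for, the parts already uploaded until you complete or stop the upload, so it is worth knowing how to find and stop leftovers.

Below is a minimal sketch built on the real `s3api list-multipart-uploads` and `abort-multipart-upload` commands. Assumptions:

- `abort_incomplete_uploads` is a hypothetical helper name.
- The AWS CLI is already configured.
- The function stops *every* unfinished upload in the bucket, so only run it when no upload is in progress.

```shell
#!/usr/bin/env bash
# Hypothetical helper: stop every unfinished multipart upload in a bucket.
# Assumes a configured AWS CLI with permission to list and abort uploads.
abort_incomplete_uploads() {
  local bucket="$1"
  # One "<key><TAB><upload id>" line per unfinished upload.
  # With no uploads, text output is the literal word "None", skipped below.
  aws s3api list-multipart-uploads --bucket "$bucket" \
      --query 'Uploads[].[Key, UploadId]' --output text |
  while IFS=$'\t' read -r key upload_id; do
    if [ -z "$key" ] || [ "$key" = "None" ]; then
      continue
    fi
    echo "Aborting upload $upload_id for key $key"
    # </dev/null keeps the CLI from consuming the loop's input.
    aws s3api abort-multipart-upload --bucket "$bucket" --key "$key" \
        --upload-id "$upload_id" < /dev/null
  done
}
```

Usage: `abort_incomplete_uploads my-demo-bucket`. The bucket name is a placeholder.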

Multipart upload process

Multipart upload is a three-step process:

Step 1. Multipart upload initiation: When you send a request to initiate a multipart upload, Amazon S3 returns a response with an upload ID, which is a unique identifier for your multipart upload. You must include this upload ID whenever you upload parts, list the parts, complete an upload, or stop an upload. If you want to provide any metadata describing the object being uploaded, you must provide it in the request that initiates the multipart upload.

Step 2. Parts upload: When uploading a part, in addition to the upload ID, you must specify a part number. You can choose any part number between 1 and 10,000. A part number uniquely identifies a part and its position in the object you are uploading.

Step 3. Multipart upload completion: When you complete a multipart upload, Amazon S3 creates an object by concatenating the parts in ascending order of part number. If any object metadata was provided in the initiate multipart upload request, Amazon S3 associates that metadata with the object. After a successful complete request, the parts no longer exist.
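Besides the 1 to 10,000 part-number range, the S3 documentation limits each part to between 5 MiB and 5 GiB, with no minimum for the last part. Together these rules decide how many parts a file needs for a given part size.

The sketch below does that arithmetic. `plan_parts` is a hypothetical helper, and sizes are in bytes.

```shell
#!/usr/bin/env bash
# Hypothetical helper: how many parts does a file need for a given part size?
# Limits (from the S3 docs): at most 10,000 parts; every part except the
# last must be 5 MiB..5 GiB, so smaller part sizes are rejected here.
MIN_PART=$((5 * 1024 * 1024))
MAX_PART=$((5 * 1024 * 1024 * 1024))
MAX_PARTS=10000

plan_parts() {
  local file_size="$1" part_size="$2"
  if (( part_size < MIN_PART || part_size > MAX_PART )); then
    echo "part size must be between $MIN_PART and $MAX_PART bytes" >&2
    return 1
  fi
  # Ceiling division: a partial final chunk still needs its own part number.
  local parts=$(( (file_size + part_size - 1) / part_size ))
  if (( parts > MAX_PARTS )); then
    echo "$parts parts exceeds the $MAX_PARTS-part limit; use bigger parts" >&2
    return 1
  fi
  echo "$parts"
}
```

Usage: `plan_parts "$(wc -c < video.mp4)" $((40 * 1024 * 1024))`. For a file of about 143 MiB this gives 4, which matches the four chunks `split -b 40M` produces in the tutorial.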

Hands-On Tutorial:

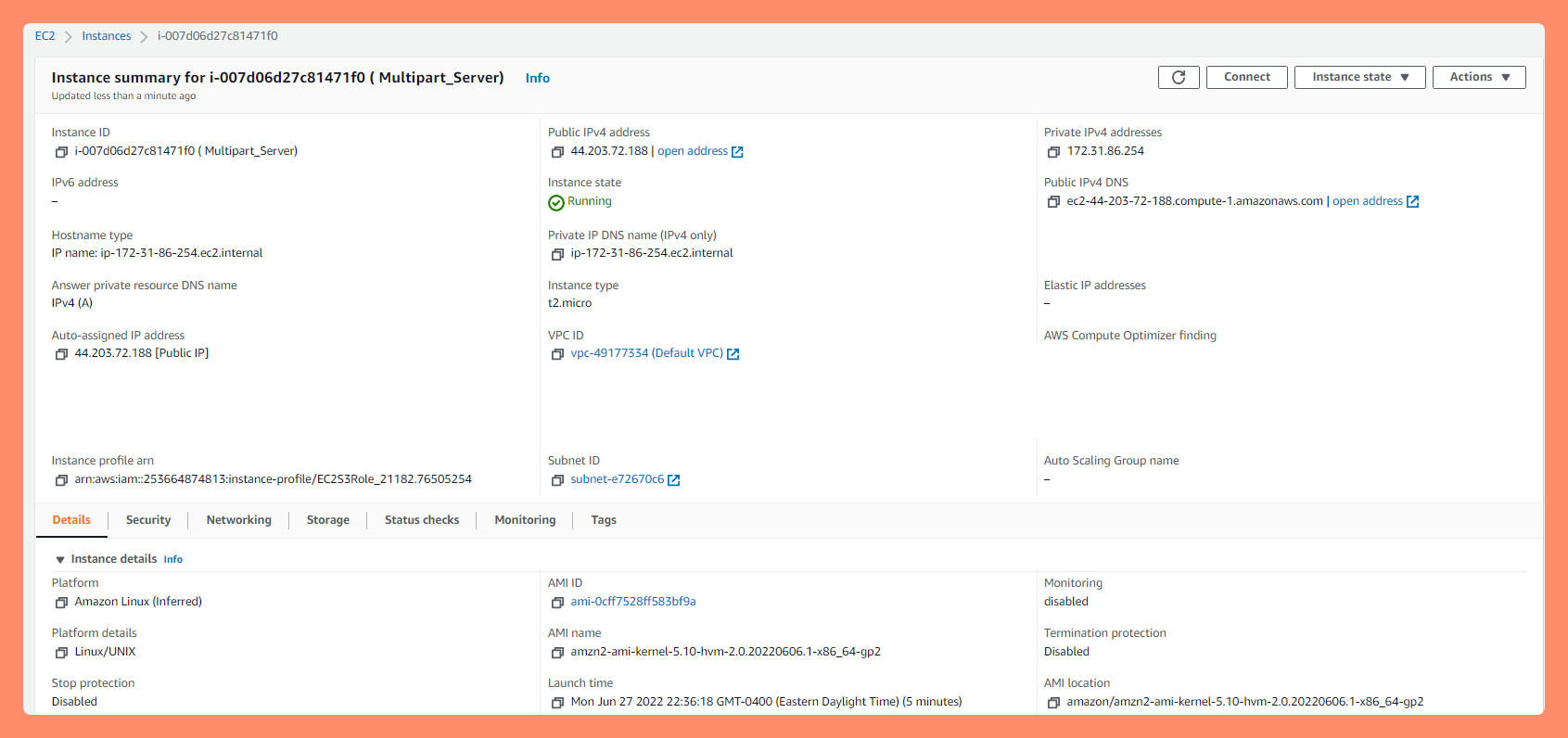

In this practical example, we will upload a large video file (.mp4) from an EC2 instance to our S3 bucket using the AWS CLI "s3api" multipart upload operations. Follow along if you want.

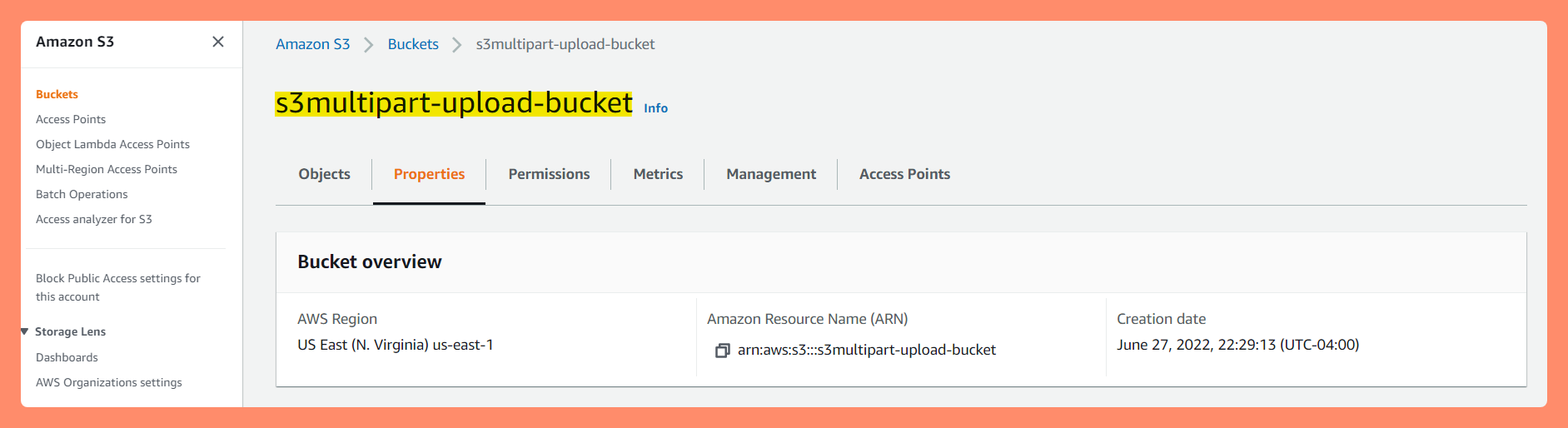

Create an S3 bucket

Create an EC2 instance

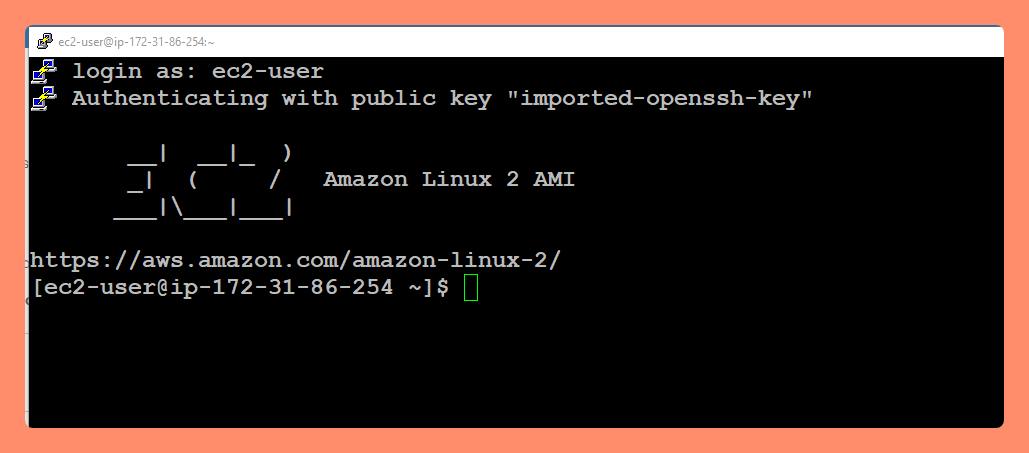

SSH into the EC2 instance

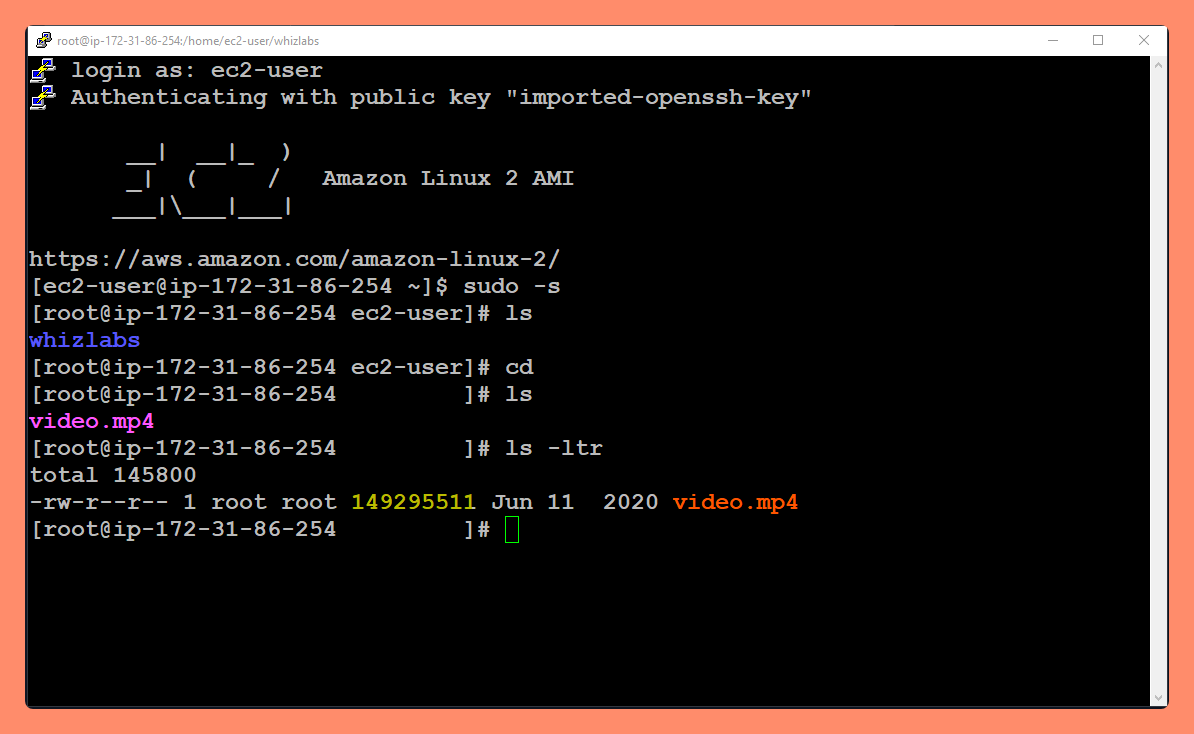

View the original file on the EC2 instance (a video file in my case; you should have your own sample file to upload).

Note: This file is ~143 MB in size, so we'll use the multipart feature to upload it to S3.

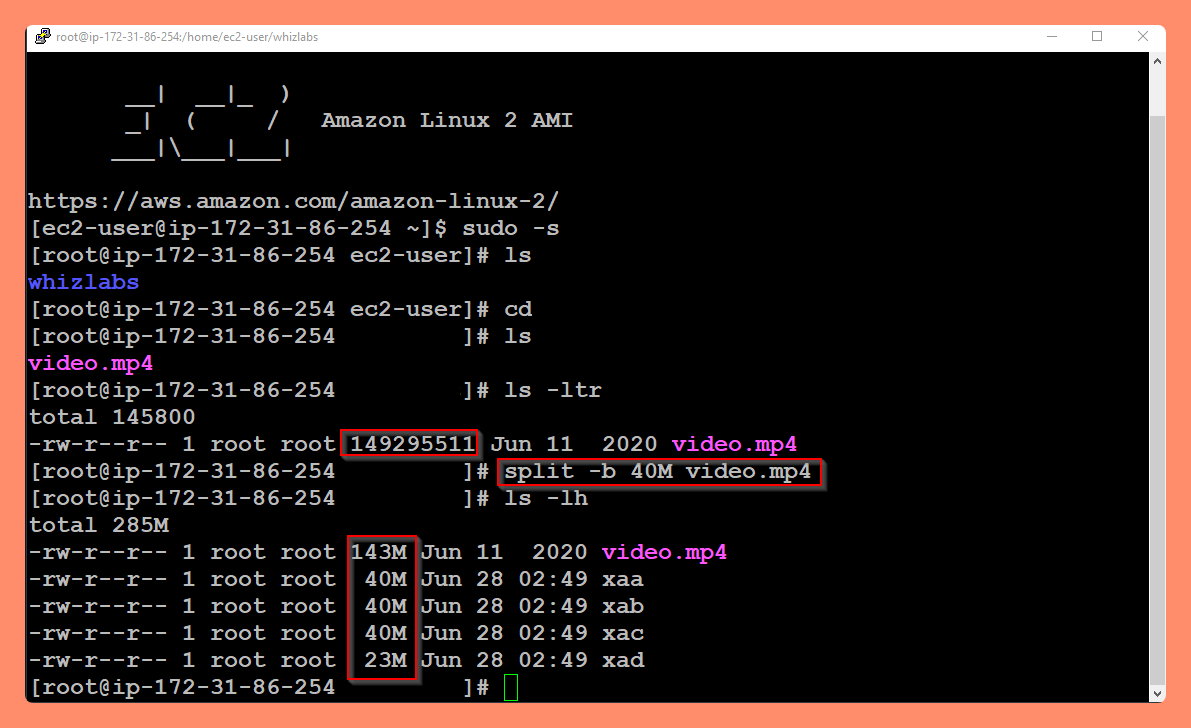
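To check the size from the shell and apply the ~100 MB rule of thumb from earlier, something like the following works.

This is a hedged sketch:

- `should_use_multipart` is a hypothetical helper.
- The threshold is treated as 100 MiB.
- The threshold is only a guideline, not an S3 limit.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare a file's size with the ~100 MB guideline.
THRESHOLD_BYTES=$((100 * 1024 * 1024))   # 100 MiB; a rule of thumb, not a limit

should_use_multipart() {
  local file="$1" size
  # "wc -c < file" prints the size in bytes with both GNU and BSD wc.
  size=$(wc -c < "$file") || return 1
  size=$((size))   # normalise: BSD wc pads the number with spaces
  echo "$file: $size bytes"
  # Exit status 0 (true) when the file is at or above the threshold.
  (( size >= THRESHOLD_BYTES ))
}
```

Usage: `should_use_multipart video.mp4 && echo "Use multipart upload"`.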

Split the file into multiple parts

The split command splits a large file into many pieces (chunks) according to the options you give it.

split [options] [filename]

split -b 40M video.mp4

Here we are dividing the 143 MB file into 40 MB chunks. View the chunked files:

Here "xaa" through "xad" are the chunked files (parts), which split names in alphabetical order. Each file is 40 MB except the last one. The number of chunks depends on the size of your original file and the chunk size you pass to split.

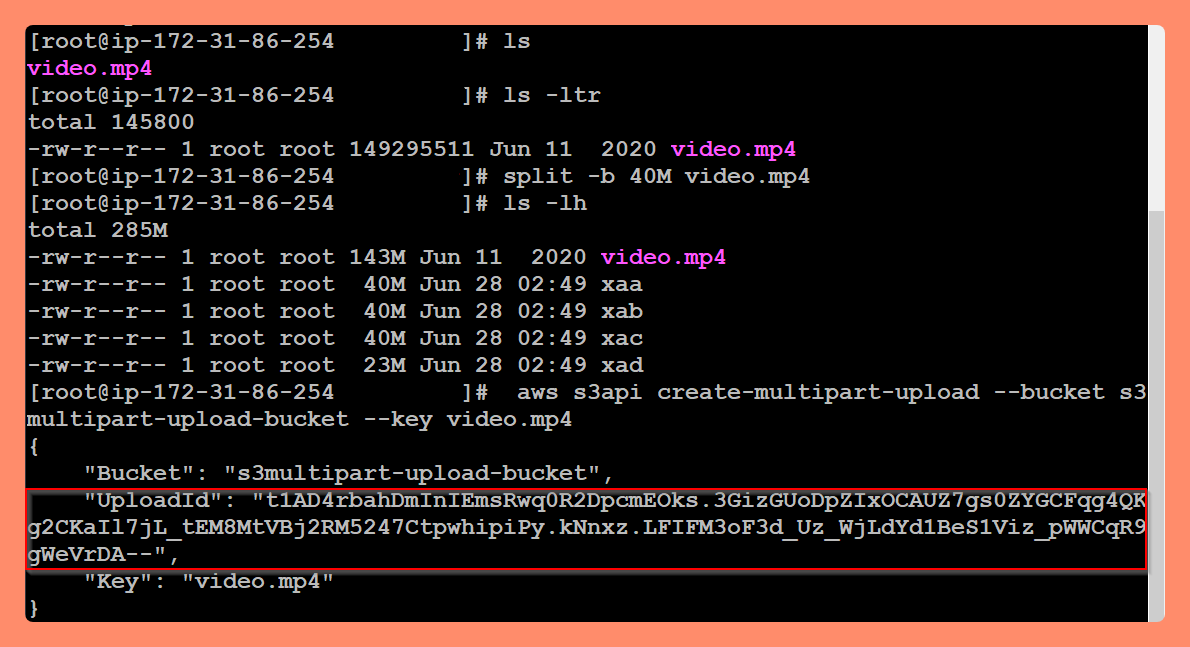
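The self-contained sketch below runs on a throwaway file. It shows how split names and sizes the chunks, and why alphabetical order is the right part order.

Assumptions:

- `sample.bin`, its 12 MiB size and the `part-` prefix are stand-ins for illustration.
- With the tutorial's video, the equivalent is `split -b 40M video.mp4`.

```shell
#!/usr/bin/env bash
set -euo pipefail
# Work in a scratch directory so the demo files are easy to clean up.
workdir=$(mktemp -d)
cd "$workdir"

# Hypothetical 12 MiB sample file standing in for video.mp4.
head -c $((12 * 1024 * 1024)) /dev/urandom > sample.bin

# 5M = 5 MiB, the smallest size S3 accepts for any part except the last.
# The prefix "part-" replaces the default "x": part-aa, part-ab, part-ac.
split -b 5M sample.bin part-
ls -l part-*

# Concatenating the chunks in alphabetical (glob) order rebuilds the file,
# just as S3 joins parts in ascending part-number order.
cat part-* | cmp - sample.bin && echo "chunks rebuild the original file"
```

The result is two 5 MiB chunks plus a 2 MiB final chunk. The final chunk is allowed to be smaller than 5 MiB because it is the last part.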

Initiate the multipart upload to generate an UploadId

We initiate the multipart upload with an AWS CLI command, which returns an UploadId that we will use in the later steps.

aws s3api create-multipart-upload --bucket [Bucket name] --key [original file name]

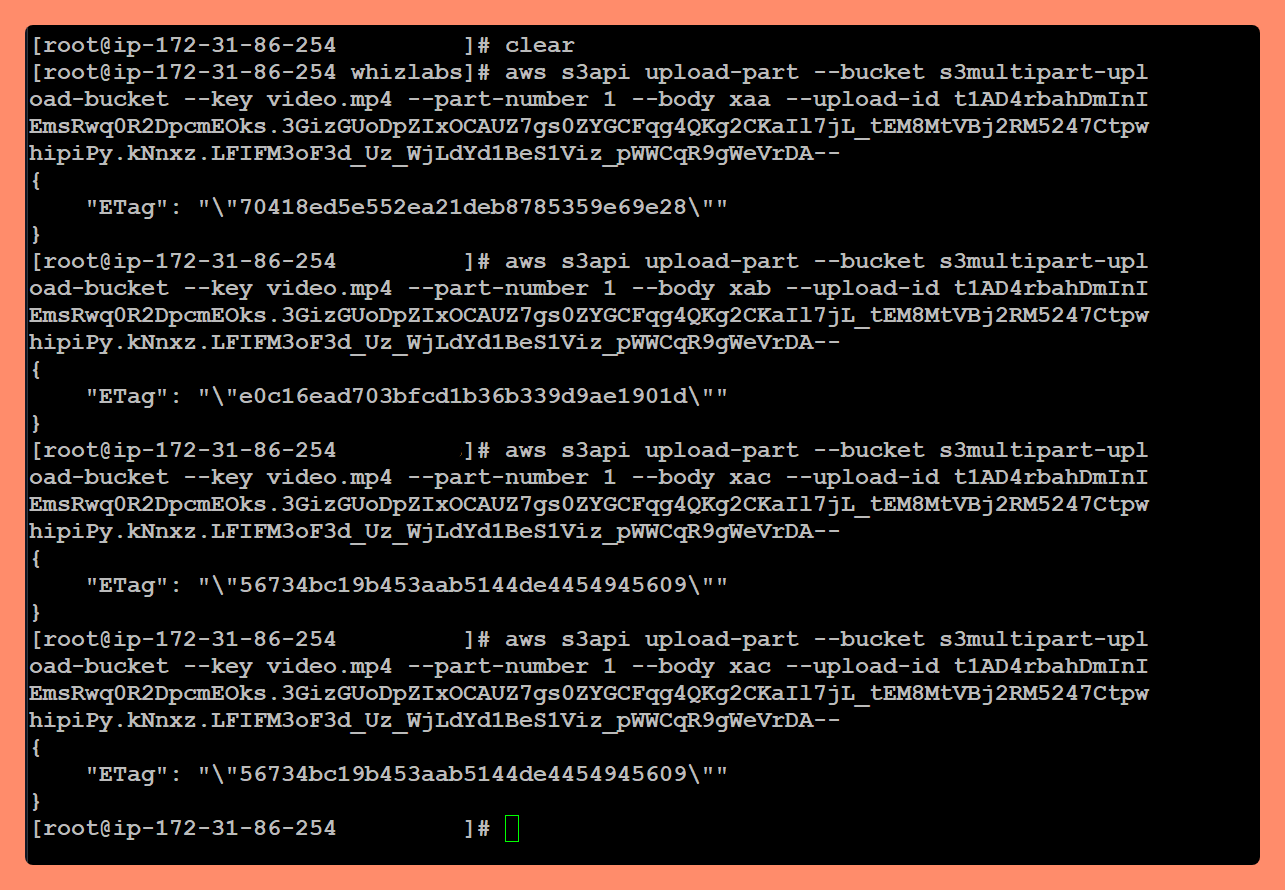
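Instead of copying the UploadId out of the JSON response by hand, you can capture it straight into a shell variable. The AWS CLI's global `--query` and `--output` options extract just that field.

A minimal sketch, assuming a configured CLI. `start_upload` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: start a multipart upload and print only its UploadId.
start_upload() {
  local bucket="$1" key="$2"
  # --query UploadId selects the one field we need;
  # --output text prints it without JSON quoting.
  aws s3api create-multipart-upload --bucket "$bucket" --key "$key" \
      --query UploadId --output text
}
```

Usage: `UPLOAD_ID=$(start_upload my-demo-bucket video.mp4)`. The bucket name is a placeholder.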

Upload the individual chunks (parts), hence "multipart upload"

Next, we upload each file chunk one by one with its part number. Part numbers follow the alphabetical order of the chunk files:

Chunk file names:

Part 1 > xaa

Part 2 > xab

Part 3 > xac

Part 4 > xad

aws s3api upload-part --bucket [bucketname] --key [filename] --part-number [number] --body [chunk file name] --upload-id [id]

For every part, use the same key (the original file name) and the same UploadId as in the previous step.

Note: Copy the ETag and part number from each response for later use.

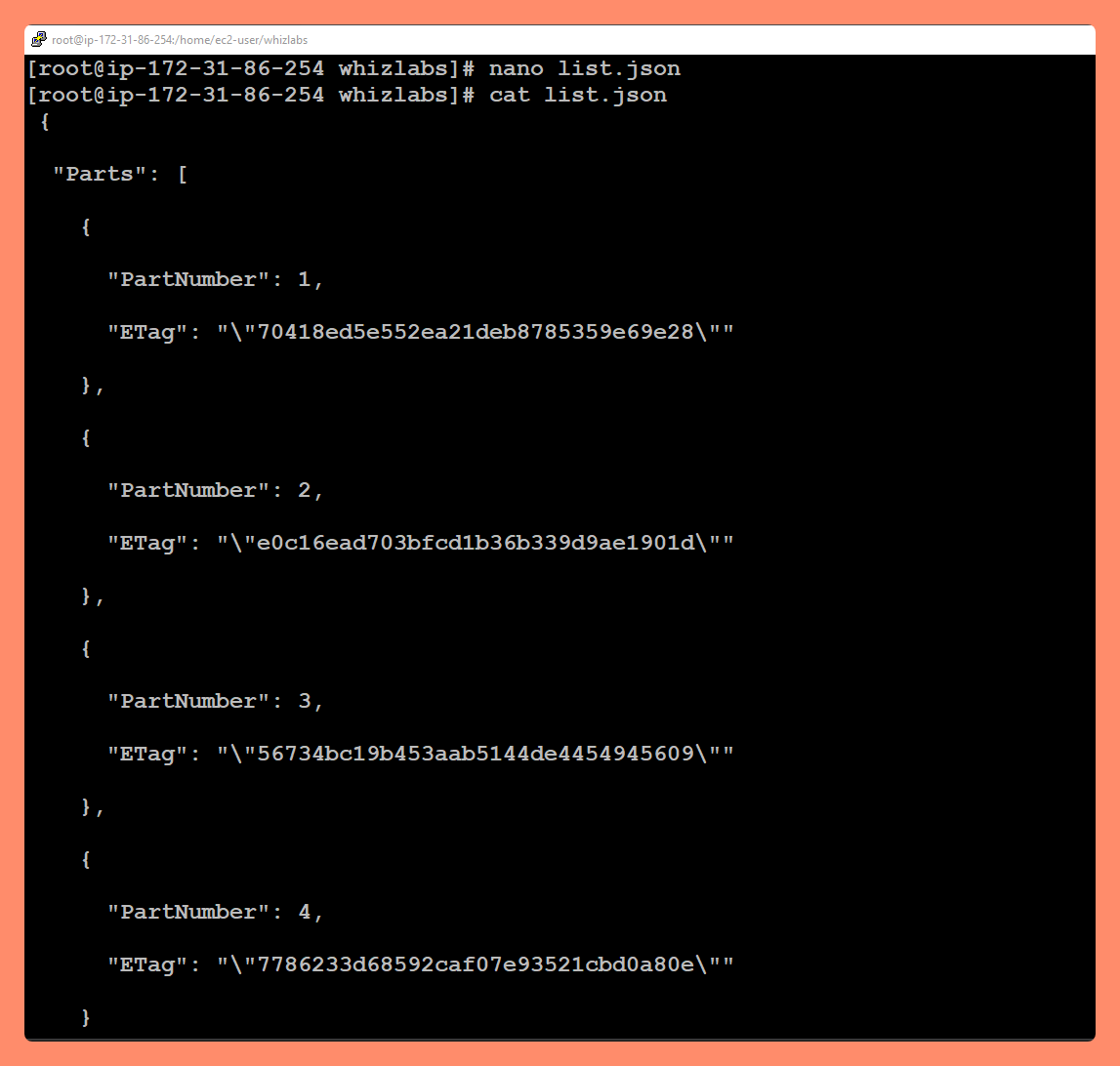
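Uploading each chunk by hand gets tedious when there are many parts. The hedged sketch below loops over the chunks and assigns part numbers in the same order as the "Part 1 > xaa" mapping above. It prints each part number with its ETag, which is exactly what the JSON file needs.

Assumptions:

- `upload_parts` is a hypothetical helper.
- The AWS CLI is configured.

Because parts are independent, they could also be uploaded in parallel. This sketch sends them one at a time for clarity.

```shell
#!/usr/bin/env bash
# Hypothetical helper: upload chunks in order, printing "<part> <ETag>" lines.
upload_parts() {
  local bucket="$1" key="$2" upload_id="$3"
  shift 3
  local part=1 chunk etag
  # Chunks are given in object order (xaa, xab, ...): first chunk = part 1.
  for chunk in "$@"; do
    etag=$(aws s3api upload-part --bucket "$bucket" --key "$key" \
        --part-number "$part" --body "$chunk" --upload-id "$upload_id" \
        --query ETag --output text) || return 1
    # S3 returns the ETag wrapped in double quotes; strip them.
    etag=${etag//\"/}
    echo "$part $etag"
    part=$((part + 1))
  done
}
```

Usage, with the upload ID stored in `UPLOAD_ID`: `upload_parts my-demo-bucket video.mp4 "$UPLOAD_ID" xaa xab xac xad > etags.txt`. This saves one "PartNumber ETag" line per chunk. `my-demo-bucket` and `etags.txt` are placeholder names.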

Create a multipart JSON file

Copy the JSON below and paste it into a file named list.json, using the nano or vi editor.

Note: Replace each ETag with the value you received when you uploaded the chunk with that part number.

The example lists three parts. To rebuild the whole file, the list needs one entry for every part you uploaded (four in this walkthrough, xaa to xad), in ascending part-number order.

{
  "Parts": [
    { "ETag": "e868e0f4719e394144ef36531ee6824c", "PartNumber": 1 },
    { "ETag": "6bb2b12753d66fe86da4998aa33fffb0", "PartNumber": 2 },
    { "ETag": "d0a0112e841abec9c9ec83406f0159c8", "PartNumber": 3 }
  ]
}

Complete the Multipart Upload

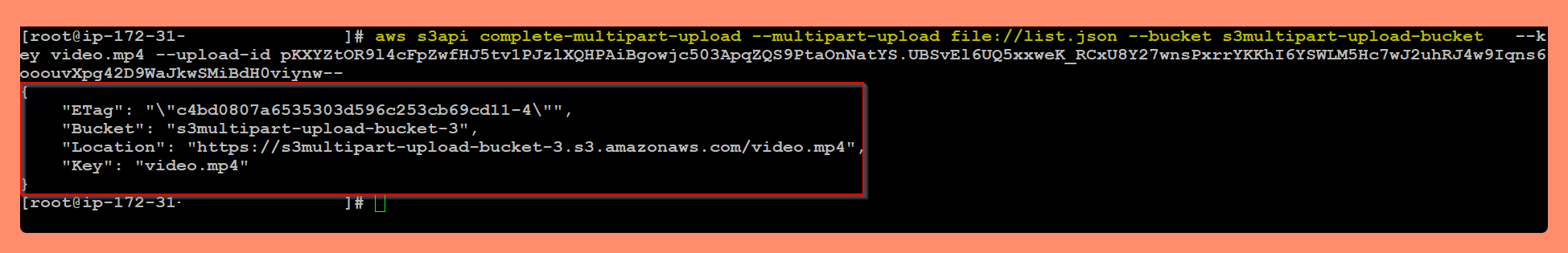

Now we join all the chunks (parts) together using the JSON file created in the previous step and the "s3api complete-multipart-upload" command.

aws s3api complete-multipart-upload --multipart-upload [json file link] --bucket [upload bucket name] --key [original data file name] --upload-id [upload id]

Here [json file link] is the path of the JSON file prefixed with file://, for example file://list.json. Replace the other placeholders with your bucket name, the original file name and your upload ID.

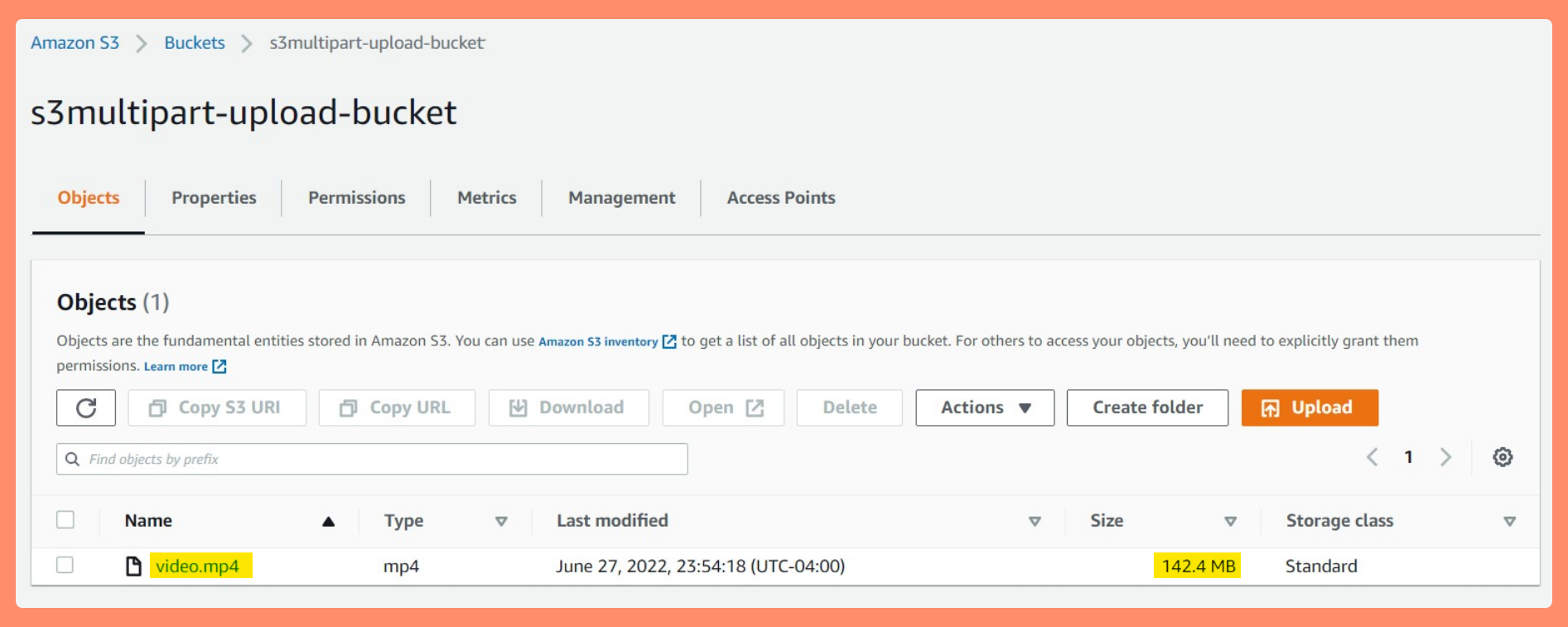
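You can also script the last two steps: generate list.json from saved "PartNumber ETag" lines, then call complete-multipart-upload.

A sketch under these assumptions:

- `build_parts_json` and `finish_upload` are hypothetical helper names.
- The input lines are already in ascending part order.
- The ETags need no JSON escaping, which holds for plain hex ETags like the ones in list.json above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "<part> <etag>" lines on stdin and print the
# Parts JSON. No JSON escaping is done (fine for plain hex ETags).
build_parts_json() {
  local part etag sep=""
  printf '{ "Parts": ['
  # "|| [ -n "$part" ]" also handles a last line without a newline.
  while read -r part etag || [ -n "$part" ]; do
    printf '%s { "ETag": "%s", "PartNumber": %d }' "$sep" "$etag" "$part"
    sep=","
  done
  printf ' ] }\n'
}

# Hypothetical helper: write list.json in the current directory from a
# pairs file, then ask S3 to assemble the parts. Assumes a configured CLI.
finish_upload() {
  local bucket="$1" key="$2" upload_id="$3" pairs_file="$4"
  build_parts_json < "$pairs_file" > list.json || return 1
  aws s3api complete-multipart-upload --multipart-upload file://list.json \
      --bucket "$bucket" --key "$key" --upload-id "$upload_id"
}
```

Usage: `finish_upload my-demo-bucket video.mp4 "$UPLOAD_ID" etags.txt`. The bucket and pairs-file names are placeholders.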

View the file in the S3 Bucket we created.

A single 143 MB video file is there. This verifies that our multipart upload from the AWS CLI on our EC2 instance succeeded and that S3 assembled the parts back into the original file.
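You can run the same check from the EC2 instance by comparing the size S3 reports for the assembled object with the size of the local file. `head-object` returns the object's metadata, including `ContentLength` in bytes.

A sketch only:

- `verify_upload` is a hypothetical helper.
- The AWS CLI is configured.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare the S3 object's size with the local file's.
verify_upload() {
  local bucket="$1" key="$2" file="$3" remote local_size
  # ContentLength from head-object is the object's size in bytes.
  remote=$(aws s3api head-object --bucket "$bucket" --key "$key" \
      --query ContentLength --output text) || return 1
  local_size=$(wc -c < "$file")
  if (( remote == local_size )); then
    echo "OK: $key is $remote bytes, same as $file"
  else
    echo "MISMATCH: S3 has $remote bytes, $file has $local_size bytes" >&2
    return 1
  fi
}
```

Usage: `verify_upload my-demo-bucket video.mp4 video.mp4`. The bucket name is a placeholder.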

We have successfully split the oversized object file into multiple individual chunks and received the assembled object in the S3 bucket.

Reference: AWS Documentation on Multipart Upload

perform a multipart upload of a file to Amazon S3

If you want a further challenge, try this: Using AWS for Multi-instance, Multi-part Uploads

Thank you for reading and/or following along with the Blog.

Happy Learning.

Like and Follow for more Azure and AWS Content.

Regards, Jineshkumar Patel